The Belief-Desire-Intention (BDI) Model

TL;DR

- This article covers the foundations of the BDI model and how it turns AI agents from simple reactive tools into proactive business partners. We look at the architectural components of beliefs, desires, and intentions while exploring real-world implementation for enterprise automation. You will learn how this model improves decision-making consistency and scalability for custom AI development projects in a high-growth tech environment.

Introduction to the BDI model and why it matters for your business

Ever wonder why some AI feels like a dumb script while other systems actually "get" the goal? Often, it's the BDI model under the hood. (On the external concurrency of current BDI frameworks for MAS - arXiv)

Honestly, it's just a way to make software act more like us. Instead of just reacting, it uses:

- Beliefs: What the agent thinks is true about the world.

- Desires: The stuff it wants to achieve (its goals).

- Intentions: The specific plans it's actually committed to doing.

According to Wikipedia, this helps agents balance "thinking" versus "doing" so they don't freeze up.
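To make the three pieces concrete, here is a minimal Python sketch of an agent's state. The class name `AgentState`, the `commit` method, and the example goals are all made up for illustration; real BDI frameworks model these attitudes in their own ways.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    """Illustrative container for the three BDI attitudes (hypothetical names)."""
    beliefs: dict = field(default_factory=dict)     # what the agent thinks is true
    desires: list = field(default_factory=list)     # goals it would like to achieve
    intentions: list = field(default_factory=list)  # goals it has committed to

    def commit(self, goal: str) -> None:
        """Promote a desire to an intention (no feasibility check in this sketch)."""
        if goal in self.desires and goal not in self.intentions:
            self.intentions.append(goal)


state = AgentState(
    beliefs={"order_in_stock": True},
    desires=["ship_order", "upsell_warranty"],
)
state.commit("ship_order")
print(state.intentions)  # ['ship_order']
```

The point of keeping desires and intentions in separate lists is that the agent can want many things while only acting on the few it has committed to.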

Why this actually helps your bottom line

If you're running a business, BDI isn't just tech jargon—it's about ROI and not having your systems break.

- Reduced Operational Costs: Since these agents can handle many "edge cases" without a human stepping in, you can save a lot on support and manual overrides.

- Smarter Customer Bots: Instead of a chatbot that says "I don't understand," a BDI-driven bot knows its desire is to help you and can pivot its intentions if you change your mind mid-chat.

- Risk Mitigation: In autonomous systems (like warehouse robots), BDI helps avoid "decision paralysis," which can mean fewer accidents and less downtime.

It's huge for things like autonomous vehicles or retail bots that need to pivot when things change fast. Next, we'll dig into the techy bits.

The Three Pillars: Beliefs, Desires, and Intentions

Ever feel like your software is just a fancy "if-then" machine that breaks the second things get messy? That's because most systems don't actually have a "POV" on the world around them.

Beliefs are basically what the agent thinks is happening. But here is the kicker—we call them "beliefs" and not "facts" because the world is unpredictable. As Sara Zuppiroli et al. (2025) explain in their research on BDI ontologies, these represent the informational state that bridges the gap between raw data and actual reasoning.

- Uncertainty: A healthcare bot might believe a patient is stable based on sensors, even if the sensor is slightly glitchy.

- Updates: When new data hits, the agent runs a "belief revision function" to swap out old info for new.
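A belief revision function can be very simple. The sketch below assumes the newest reading always wins, which is a simplification; the function name `revise_beliefs` and the belief keys are hypothetical, and a production system might weigh sensor reliability instead.

```python
def revise_beliefs(beliefs: dict, percept: dict) -> dict:
    """Return a new belief base where fresh percepts overwrite stale entries.

    Simplifying assumption: the newest reading always wins. Real systems
    may weigh sensor reliability or keep a history instead.
    """
    updated = dict(beliefs)  # copy so the caller's belief base is untouched
    updated.update(percept)
    return updated


beliefs = {"patient_stable": True, "heart_rate": 72}
percept = {"heart_rate": 131, "patient_stable": False}
beliefs = revise_beliefs(beliefs, percept)
print(beliefs)  # {'patient_stable': False, 'heart_rate': 131}
```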

Desires are just the "wish list"—all the things the agent wants to do. Intentions are where the rubber meets the road. According to the BDI model discussed by Aniket Kumar (2025), an intention is a desire the agent has actually committed to.

One thing to keep in mind is that agents usually rely on a "static plan library." This means they can only do what they're programmed for—they don't really "learn" new tricks on their own if the plan fails.
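Here is a rough idea of what a static plan library might look like in Python. The `Plan` class, the goals, and the plan names are invented for this example. Each plan says which goal it serves and under which beliefs it applies, and if nothing matches, the agent simply has no answer.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Plan:
    goal: str                        # the goal this plan can achieve
    context: Callable[[dict], bool]  # beliefs that must hold for it to apply
    body: str                        # name of the routine to run (illustrative)


# Fixed at design time: the agent cannot add to this list on its own.
PLAN_LIBRARY = [
    Plan("deliver_parcel", lambda b: b.get("road_open", False), "drive_main_route"),
    Plan("deliver_parcel", lambda b: b.get("bike_available", False), "send_bike_courier"),
]


def select_plan(goal: str, beliefs: dict) -> Optional[str]:
    """Return the first plan body whose goal and context match, else None."""
    for plan in PLAN_LIBRARY:
        if plan.goal == goal and plan.context(beliefs):
            return plan.body
    return None


print(select_plan("deliver_parcel", {"bike_available": True}))  # send_bike_courier
print(select_plan("deliver_parcel", {}))                        # None
```

The `None` case is the limitation in action: when no pre-written plan fits the current beliefs, this kind of agent has nothing to fall back on.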

In finance, a bot might desire to buy ten different stocks but only form an intention to buy one because of budget limits. This commitment keeps the AI out of "decision paralysis" by narrowing down what it needs to focus on.
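The budget example can be sketched as a small filter from desires to intentions. Everything here is an assumption for illustration: the tickers, costs, and priority scores are made up, and the greedy "highest priority first" rule is just one simple way to deliberate.

```python
def choose_intentions(desires, budget):
    """Greedy filter: commit to the highest-priority purchases that fit the budget.

    Each desire is a (ticker, cost, priority) tuple. All values are hypothetical.
    """
    intentions = []
    remaining = budget
    for ticker, cost, _priority in sorted(desires, key=lambda d: d[2], reverse=True):
        if cost <= remaining:
            intentions.append(ticker)
            remaining -= cost
    return intentions


desires = [("AAA", 900.0, 0.8), ("BBB", 700.0, 0.6), ("CCC", 400.0, 0.3)]
intentions = choose_intentions(desires, budget=1000.0)
print(intentions)  # ['AAA']
```

The agent still "wants" all three stocks, but only one survives the budget check and becomes something it will act on.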

Next up, we'll look at how these agents get built, and then at the loop they actually "think" through.

Implementing BDI in Modern AI Workflows

Building these agents isn't just about writing code; it's about giving them a "brain" that doesn't crack under pressure. Most companies use the BDI model to bridge the gap between simple automation and actual reasoning. (Integrating Belief-Desire-Intention agents with large language ...)

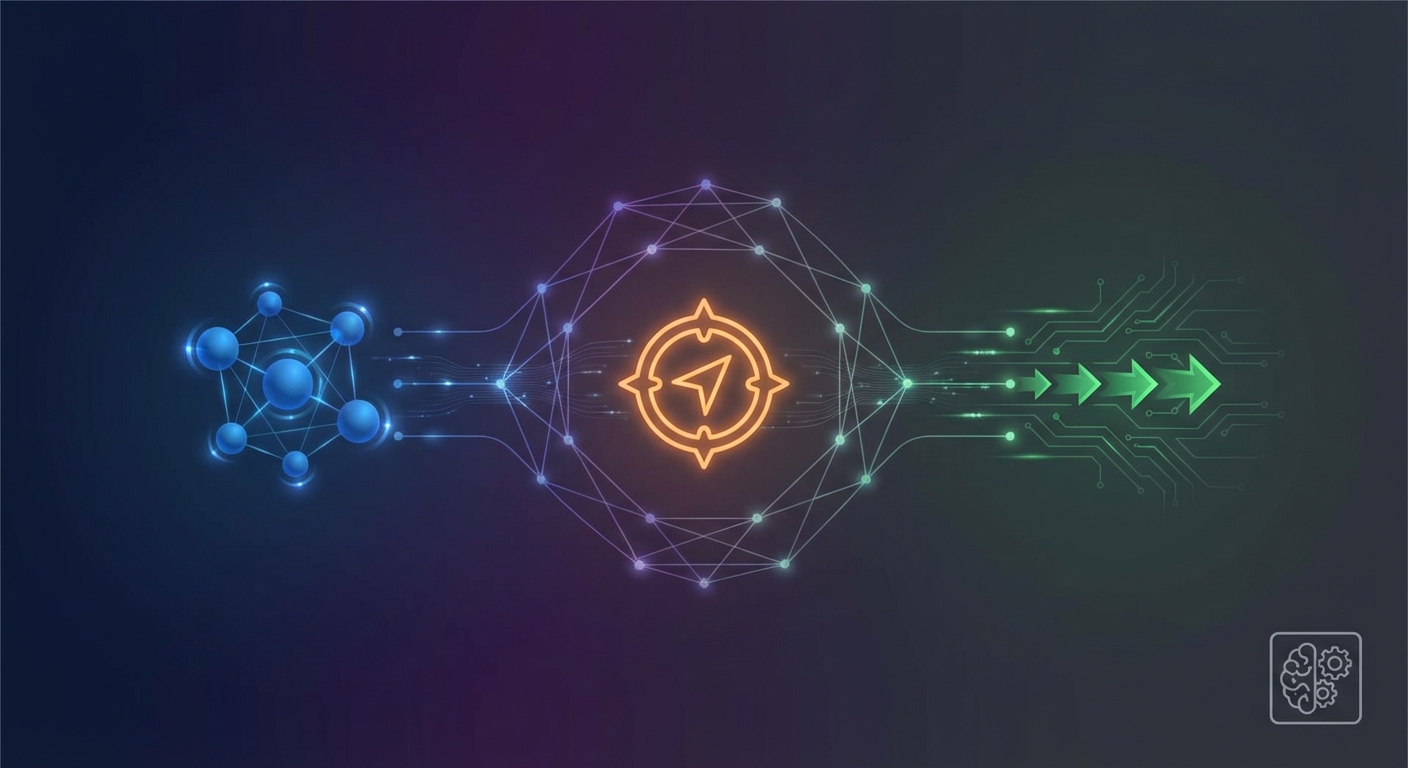

To actually start building this, developers usually use specific BDI frameworks like Jason (which uses a language called AgentSpeak), Jadex for Java-based apps, or LightBDI for more lightweight stuff. The workflow usually looks like this:

- Belief Revision: Agents constantly update their "world view" based on sensor data or APIs.

- Option Generation: They look at what they could do (desires) versus what they actually know (beliefs).

- Commitment: The agent picks a plan and sticks to it until it either works or becomes impossible.
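The commitment step is the easiest one to get wrong, so here is a small sketch of the "stick with it until it works or becomes impossible" rule. The `achieved` and `impossible` belief keys and the function name are assumptions made for this example, not part of Jason, Jadex, or any other framework.

```python
def keep_intention(goal: str, beliefs: dict) -> bool:
    """Hold on to a goal until the agent believes it is achieved or impossible."""
    achieved = goal in beliefs.get("achieved", set())
    impossible = goal in beliefs.get("impossible", set())
    return not (achieved or impossible)


intentions = ["deliver_parcel", "recharge"]
beliefs = {"achieved": {"recharge"}, "impossible": set()}

# Drop anything that is already done or can no longer be done.
intentions = [goal for goal in intentions if keep_intention(goal, beliefs)]
print(intentions)  # ['deliver_parcel']
```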

According to a 2020 presentation by Alaa Khamis for the IEEE, this model is a "matter of weighing conflicting considerations" to decide on the best conduct. It’s why a delivery bot doesn't just stop when it sees a puddle—it recalculates.

In robotics, this means a bot might desire to move fast but intends to slow down because its beliefs say the floor is slippery. Next, we'll look at the logic behind the interpreter cycle.

The BDI Interpreter Cycle and Practical Reasoning

Ever wonder how a bot actually "thinks" its way through a mess? It's not just a big list of rules; it's a loop that never stops.

The BDI interpreter cycle is basically the heartbeat of the agent. It's a sense-decide-act loop that keeps the AI from getting stuck on a plan that doesn't work anymore. In agent theory, we call Beliefs, Desires, and Intentions "mental attitudes," which is basically the internal state that drives the bot's behavior.

- Option Generation: The agent looks at what's happening (events) and figures out what it could do based on its goals.

- Deliberation: It filters those options—basically a "reality check"—to see which ones it should actually commit to.

- Maintenance: If a goal becomes impossible, like a retail bot trying to ship an out-of-stock item, it just drops that "attitude" (the intention) so it stops wasting effort on a dead end and can move on.

Here is a quick look at how the logic flows in the interpreter:
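As a rough sketch (not taken from any particular framework), one pass of the loop could look like this in Python. The function `bdi_step`, the goals, and the plan names are hypothetical, and deliberation is reduced to "commit to the first workable option" to keep things short.

```python
def bdi_step(beliefs, desires, intentions, percept, plan_library):
    """Run one pass of a simplified sense-decide-act loop."""
    # 1. Sense: fold the new percept into the belief base.
    beliefs = {**beliefs, **percept}

    # 2. Option generation: desires with at least one plan whose context holds.
    def applicable(goal):
        return [body for context, body in plan_library.get(goal, []) if context(beliefs)]

    options = [goal for goal in desires if applicable(goal)]

    # 3. Deliberation and maintenance: drop intentions that are no longer
    #    achievable, then commit to one new option if nothing is left.
    intentions = [goal for goal in intentions if goal in options]
    if not intentions and options:
        intentions = [options[0]]

    # 4. Act: pick the first applicable plan for the current intention.
    action = applicable(intentions[0])[0] if intentions else None
    return beliefs, intentions, action


plan_library = {
    "ship_item": [(lambda b: b.get("in_stock", False), "pack_and_ship")],
    "offer_backorder": [(lambda b: not b.get("in_stock", True), "email_backorder_offer")],
}
desires = ["ship_item", "offer_backorder"]

beliefs, intentions, action = bdi_step({}, desires, [], {"in_stock": True}, plan_library)
first_action = action
print(first_action)   # pack_and_ship

beliefs, intentions, action = bdi_step(beliefs, desires, intentions, {"in_stock": False}, plan_library)
second_action = action
print(second_action)  # email_backorder_offer
```

On the second pass the item is out of stock, so the agent drops its intention to ship and commits to the backorder offer instead of retrying a plan that can't work.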

In a finance app, the bot might desire to trade a stock, but its intentions change if the API says the market just crashed. It's all about being flexible.

While the cycle provides a robust framework, applying it to complex, real-world scenarios reveals several limitations.

Challenges and the Future of Agent Architecture

BDI is cool but it ain't perfect. The biggest headache? Classic agents can't really "learn" from their mistakes because they are limited by their pre-defined plan libraries. If the world changes in a way the developer didn't expect, they might keep trying a broken strategy until you manually fix the code.

- Neuro-symbolic AI: We're starting to see folks mix BDI logic with LLMs to get the best of both worlds.

- Lookahead issues: Most systems don't actually plan far ahead, which is risky in high-stakes spots like surgery or fast-paced retail.
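One way to picture the hybrid idea is a plan lookup that falls back to a generator when the static library comes up empty. This is only a sketch under loud assumptions: `suggest_plan` is a hypothetical callable standing in for an LLM call, the stub below returns canned steps, and no real model API is used.

```python
from typing import Callable, Optional


def plan_for(goal: str, library: dict,
             suggest_plan: Optional[Callable[[str], list]] = None) -> Optional[list]:
    """Look up a plan; if none exists, ask a (hypothetical) generator for one."""
    if goal in library:
        return library[goal]
    if suggest_plan is not None:
        proposed = suggest_plan(goal)
        # A real system would validate the proposal before trusting or storing it.
        library[goal] = proposed
        return proposed
    return None


def stub_llm(goal: str) -> list:
    """Stand-in for an LLM call; returns canned steps for the demo."""
    return ["apologize", "escalate_to_human"]


library = {"ship_item": ["pack", "label", "dispatch"]}
print(plan_for("handle_damaged_parcel", library))            # None
print(plan_for("handle_damaged_parcel", library, stub_llm))  # ['apologize', 'escalate_to_human']
```

Without the fallback, the classic agent has no plan for the unexpected goal; with it, the symbolic loop stays in charge while the generator fills the gap.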